Evidence-first AI bugfix bot

efix turns a failing reproduction command into a pull request with a minimal patch, test updates, before/after evidence, and explicit rollback notes. It is designed for teams that want auditability, not just plausible diffs.

What teams care about

PR body includes proof, not just patch text.

Policies are enforced in code, not left to prompt compliance.

Starts as a GitHub Action, so teams can pilot without a platform migration.

Positioning

Most coding agents optimize for patch generation. efix optimizes for reviewer confidence: reproducible failures, enforced test expectations, bounded changes, and an evidence artifact trail.

Every run records commands, exit codes, and before/after output in `artifacts/evidence.json` and `artifacts/evidence.md`.
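As a rough illustration of what such a record might hold, here is a minimal sketch. The `CommandEvidence` shape, its field names, and the `renderEvidenceMarkdown` helper are assumptions made for this example; they are not the actual schema of `artifacts/evidence.json`.

```typescript
// Hypothetical record shape; field names are assumptions, not the real schema.
interface CommandEvidence {
  phase: "before" | "after";
  command: string;
  exitCode: number;
  output: string;
}

// Render the records as a small Markdown report (a stand-in for evidence.md).
function renderEvidenceMarkdown(records: CommandEvidence[]): string {
  const lines: string[] = ["# Evidence", ""];
  for (const r of records) {
    lines.push(`## ${r.phase}: \`${r.command}\``);
    lines.push(`Exit code: ${r.exitCode}`);
    lines.push("");
    lines.push(r.output.trim());
    lines.push("");
  }
  return lines.join("\n");
}

// Illustrative data only: a failing run before the patch, a passing run after.
const evidenceRecords: CommandEvidence[] = [
  { phase: "before", command: "npm test", exitCode: 1, output: "1 failing\n" },
  { phase: "after", command: "npm test", exitCode: 0, output: "12 passing\n" },
];

// The same records serialized two ways, mirroring the .json and .md artifacts.
const evidenceJson = JSON.stringify(evidenceRecords, null, 2);
const evidenceMarkdown = renderEvidenceMarkdown(evidenceRecords);
```

Keeping one structured source and deriving the Markdown from it means the human-readable report cannot drift from the machine-readable one.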

Mandatory tests, diff limits, and command allowlists are checked by the orchestrator. The model does not get to skip guardrails.
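One way to picture checks that live in the orchestrator rather than in the prompt is a plain function that inspects a finished run. This is a sketch under assumptions: the `Policy` and `RunSummary` shapes and the `checkPolicy` name are hypothetical, loosely modeled on the `commands.allow`, `diff.max_files`, and `test.required_suites` settings.

```typescript
// Hypothetical policy shape; the real .efix.yml structure may differ.
interface Policy {
  commandsAllow: string[];
  diffMaxFiles: number;
  requiredSuites: string[];
}

// Hypothetical summary of what a run actually did.
interface RunSummary {
  commandsRun: string[];
  changedFiles: string[];
  suitesRun: string[];
}

// Return every violation found; an empty list means the run may proceed.
function checkPolicy(policy: Policy, run: RunSummary): string[] {
  const violations: string[] = [];
  for (const cmd of run.commandsRun) {
    // Exact-match allowlist; prefix or pattern matching is a separate design choice.
    if (policy.commandsAllow.indexOf(cmd) === -1) {
      violations.push(`command not allowed: ${cmd}`);
    }
  }
  if (run.changedFiles.length > policy.diffMaxFiles) {
    violations.push(
      `diff touches ${run.changedFiles.length} files, limit is ${policy.diffMaxFiles}`
    );
  }
  for (const suite of policy.requiredSuites) {
    if (run.suitesRun.indexOf(suite) === -1) {
      violations.push(`required suite not run: ${suite}`);
    }
  }
  return violations;
}
```

Because the function only reads recorded facts about the run, the model's output cannot influence whether a guardrail is evaluated.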

Start with `workflow_dispatch` or `/efix` comment triggers. No new hosted control plane is required for a pilot.
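A comment trigger implies pulling the repro command out of the comment body. The sketch below shows one plausible way to do that; `parseEfixComment` is a hypothetical helper, and the rule that only the first line is inspected is an assumption.

```typescript
// Hypothetical parser: returns the command after "/efix", or null when the
// comment is not an efix request. Only the first line is considered.
function parseEfixComment(body: string): string | null {
  const firstLine = body.trim().split("\n")[0].trim();
  const match = /^\/efix\s+(.+)$/.exec(firstLine);
  return match ? match[1].trim() : null;
}
```

A bare `/efix` with no command returns `null`, so the caller can reply with usage help instead of starting a run.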

How it works

MVP note: semantic verification is still limited; current `verify()` summarizes command pass/fail while the policy layer enforces test presence and command execution evidence.
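To make "summarizes command pass/fail" concrete, here is a minimal sketch of that kind of summary. It is not the project's `verify()` implementation; `summarizeVerification` and both interfaces are hypothetical names chosen for this example.

```typescript
// Hypothetical result of one verification command.
interface CommandResult {
  command: string;
  exitCode: number;
}

interface VerifySummary {
  passed: number;
  failed: number;
  ok: boolean;
}

// Count passes and failures by exit code only; no semantic checks are made.
function summarizeVerification(results: CommandResult[]): VerifySummary {
  const failed = results.filter((r) => r.exitCode !== 0).length;
  return {
    passed: results.length - failed,
    failed,
    // An empty result list is not a pass: there must be execution evidence.
    ok: results.length > 0 && failed === 0,
  };
}
```

Treating an empty list as not ok reflects the requirement for command execution evidence: a run that executed nothing has proved nothing.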

Operator trust model

The project already added core architecture/getting-started docs. The next docs pass should add dedicated, easier-to-find coverage for authorization rules, fork rejection, “repro command must fail” behavior, scoped staging edge cases, and an operator/security trust model page.

Troubleshooting fast path

These are the high-friction cases worth surfacing on the website and in future docs so teams can self-serve.

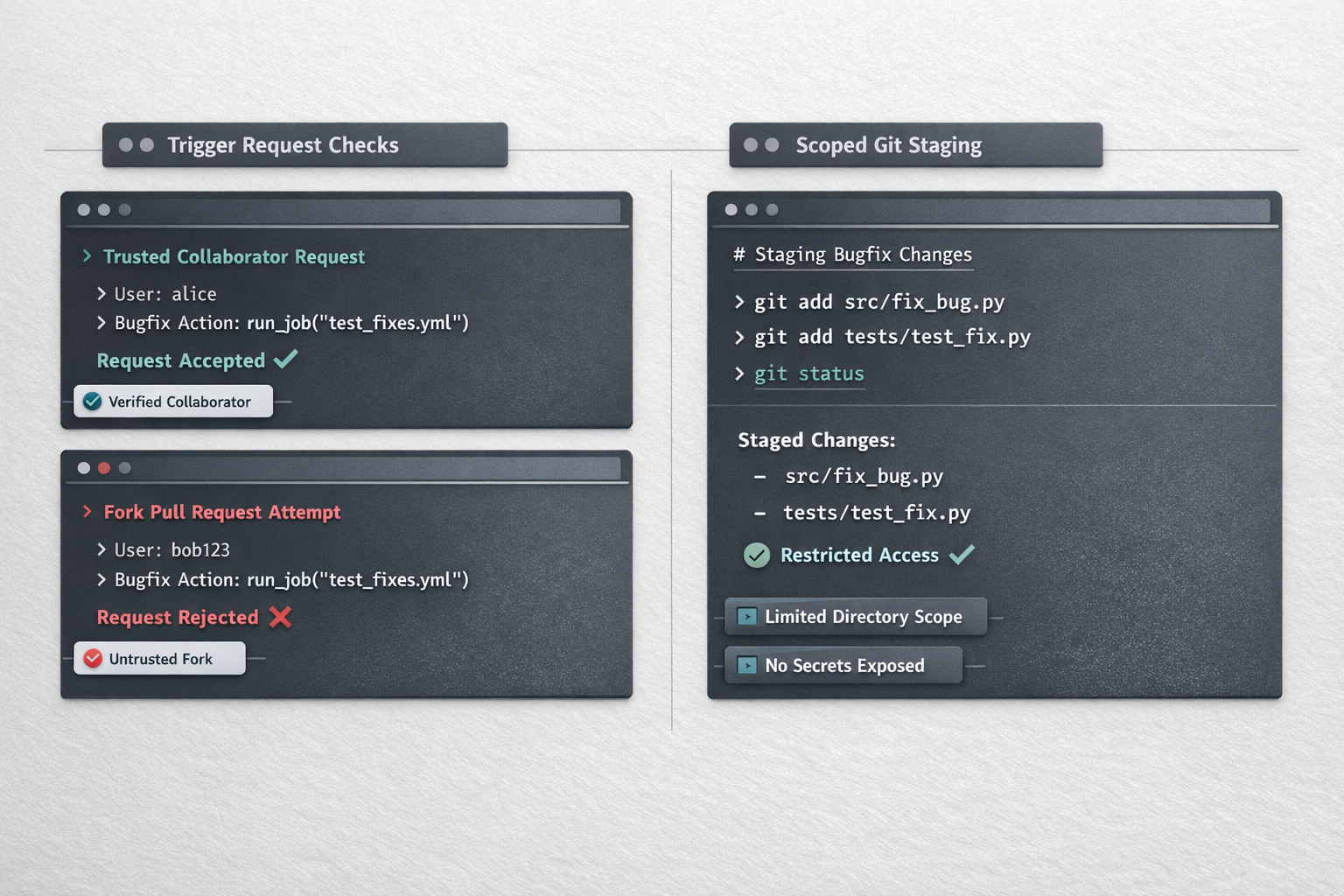

If the comment author's association with the repository is not a trusted one (for example, collaborator or member), the workflow should decline the request and post a clear explanation instead of attempting a run.

What to tell operators

Add the user as a collaborator/member or use `workflow_dispatch` for a supervised run.
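The authorization rule above can be sketched as a small gate on the `author_association` value that GitHub attaches to comments. Which associations count as trusted is an assumption here, and `isTrustedAuthor` and `declineMessage` are hypothetical helpers.

```typescript
// Assumed trusted set; the real workflow may accept a different list.
const TRUSTED_ASSOCIATIONS = ["OWNER", "MEMBER", "COLLABORATOR"];

function isTrustedAuthor(authorAssociation: string): boolean {
  return TRUSTED_ASSOCIATIONS.indexOf(authorAssociation) !== -1;
}

// Build the explanation to post when a request is declined.
function declineMessage(login: string, authorAssociation: string): string {
  return (
    `@${login}: efix did not run because your association with this repository ` +
    `(${authorAssociation}) is not trusted for comment triggers. ` +
    "Ask a maintainer to add you as a collaborator or to start a supervised workflow_dispatch run."
  );
}
```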

The current workflow restricts comment-triggered runs to same-repo PR heads, so pull requests from forks are rejected. This limits secret exposure and reduces the blast radius of untrusted branches.

What to tell operators

Reproduce the issue on a same-repo branch or run a manual workflow with explicit oversight.
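A same-repo check can be expressed by comparing the head and base repositories of the pull request. The sketch below assumes a payload trimmed to the `head.repo.full_name` and `base.repo.full_name` fields from GitHub's pull request object; `isSameRepoHead` is a hypothetical name.

```typescript
// Minimal slice of a pull request payload, limited to the fields used here.
interface PullRequestRefs {
  head: { repo: { full_name: string } | null };
  base: { repo: { full_name: string } };
}

// True only when the PR branch lives in the same repository as its target.
// A null head repo (for example, a deleted fork) is treated as untrusted.
function isSameRepoHead(pr: PullRequestRefs): boolean {
  return pr.head.repo !== null && pr.head.repo.full_name === pr.base.repo.full_name;
}
```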

efix expects a failing signal. If the command already passes, the run should stop and explain that it lacks a baseline failure to fix and prove.

What to tell operators

Provide a narrower failing test command or a minimal repro script that exits non-zero.
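The "repro command must fail" rule reduces to a gate on the baseline exit code. This is a minimal sketch; `checkBaseline` and the `BaselineDecision` type are hypothetical, and the message text is illustrative.

```typescript
type BaselineDecision =
  | { proceed: true }
  | { proceed: false; reason: string };

// Decide whether a run may continue, based on the repro command's exit code
// captured before any patch is attempted.
function checkBaseline(reproExitCode: number): BaselineDecision {
  if (reproExitCode === 0) {
    return {
      proceed: false,
      reason:
        "The repro command already passes, so there is no baseline failure to fix and prove. " +
        "Provide a narrower failing test command or a repro script that exits non-zero.",
    };
  }
  return { proceed: true };
}
```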

The workflow stages only expected code and artifact paths. Files created elsewhere during repro/verify will not be committed.

What to tell operators

Move intended generated outputs into approved paths or extend staging rules deliberately.
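Scoped staging can be pictured as a filter over the changed paths. In this sketch the approved prefixes are invented for illustration and `selectStagedPaths` is a hypothetical helper; the real staging rules live in the workflow.

```typescript
// Assumed approved prefixes; the actual workflow defines its own list.
const STAGE_PREFIXES = ["src/", "test/", "artifacts/"];

interface StagingPlan {
  staged: string[];
  skipped: string[];
}

// Split changed paths into those under an approved prefix and everything else.
function selectStagedPaths(changed: string[], prefixes: string[]): StagingPlan {
  const plan: StagingPlan = { staged: [], skipped: [] };
  for (const path of changed) {
    const approved = prefixes.some((prefix) => path.indexOf(prefix) === 0);
    (approved ? plan.staged : plan.skipped).push(path);
  }
  return plan;
}
```

Reporting the skipped paths alongside the staged ones gives operators a direct answer when an expected file is missing from the commit.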

Plans

Start with a low-risk solo trial, move into a supervised team pilot, and upgrade to a procurement-ready enterprise plan when you need identity, audit, and contracting support. Pricing is seat-based with pooled run quotas and explicit overage caps.

Solo entry

$9/mo

14-day free trial, then $9 monthly or $90 annually

Overage is off by default. Opt in for +25 runs at $10 and set a monthly spend cap.

Recommended

$24/dev/mo

$30 month-to-month or $24 annual (per billable seat)

Overage is opt-in only: +50 runs for $15, billed only up to your configured monthly cap.

Contact sales

Custom

Annual contracts with custom seats, quotas, and rollout support

Best for multi-repo rollouts, procurement review, and teams that need contracting support.

For benchmark comparisons and price anchors, see the pricing FAQ below.

How to get started

efix works best as a supervised pilot first. Start with one repository, a small set of trusted operators, and a narrow `commands.allow` policy.

    mkdir -p /path/to/repo/.github/workflows
    cp /path/to/veri-pr-agent/.github/workflows/efix.yml \
      /path/to/repo/.github/workflows/efix.yml

Copy the efix source into the repo (MVP colocated layout). The current MVP expects `src/cli.ts` and the rest of the efix source to exist in the same repository.

    cp /path/to/veri-pr-agent/.efix.yml.example /path/to/repo/.efix.yml

Then add `OPENAI_API_KEY` in GitHub Actions secrets and review `commands.allow`, `diff.max_files`, and `test.required_suites` for your repo.

efix-artifacts artifact.

If the repro command already passes, efix has no baseline failure to prove against.

`/efix npm test -- --testNamePattern "auth rejects invalid token"`

FAQ

Trust increases when constraints are explicit. These are product truths worth saying up front.

Not yet. The current positioning is beta/dogfood. The value is strongest in supervised team workflows where reviewers inspect evidence artifacts and PRs before merge.

Partially. MVP verification is command-based and policy-based. Deeper semantic verification is a v1 area.

Launch pricing is positioned below premium automated bugfix/review tools while staying above general coding assistant baselines. The benchmark table is kept here for transparency, not as the primary customer-facing plan presentation.

| Product | Observed price | Why it matters |
|---|---|---|
| GitHub Copilot Business | $19/user/mo | General coding assistant baseline in many teams |
| CodeRabbit Pro | $24/user/mo annual or $30 monthly | Closest AI PR/review automation anchor |
| Greptile Enterprise | $30/developer/mo (minimum 15) | Higher-trust codebase reasoning / enterprise posture |
| Graphite | $20 Starter, $40 Team per seat/mo | PR workflow tooling with team-level review workflows |
| Cursor Bugbot add-on | $40/user/mo | Premium automated bug review/fix positioning anchor |

Benchmark snapshot (2026-02-25). Verify current vendor pricing before customer-facing quotes or procurement decisions.

Early pricing should optimize for adoption, pilots, and case-study generation. Once success-rate evidence and cost telemetry are mature, pricing can move upmarket.